Launch AI: Building Systems That Drive Revenue in 2026

Learn how to launch AI systems effectively for B2B sales teams. Discover strategies, frameworks, and real-world approaches that generate results.

The decision to launch AI capabilities within your sales organization represents more than a technology upgrade. It's a fundamental shift in how your team operates, qualifies leads, and closes deals. Yet most B2B companies approach this transformation with either unrealistic expectations or paralyzing caution. They either bolt AI onto broken processes expecting magic, or they wait indefinitely for the "perfect moment" that never arrives. The reality sits somewhere between these extremes: successful AI adoption requires intentional planning, realistic scoping, and a clear understanding of where automation actually moves the needle versus where human judgment remains irreplaceable.

Understanding the Foundation Before You Launch AI

Too many sales leaders skip the diagnostic phase entirely. They read about competitors implementing chatbots or automated outreach and immediately start shopping for solutions. This approach consistently fails because AI amplifies existing patterns. If your current sales process leaks opportunities, adding AI will make you lose them faster and at greater scale.

Before investing a dollar in new technology, you need baseline clarity on three critical dimensions: what's actually working in your current system, where time and deals disappear, and which activities generate the highest return per hour invested. Without this foundation, you're essentially asking AI to optimize chaos.

Mapping Your Current Sales Reality

Start by documenting every tool your team currently uses, not what they're supposed to use according to policy. Sales reps are resourceful. They've built workarounds, created shadow systems, and found ways to route around obstacles. These adaptations contain valuable intelligence about where your official process fails.

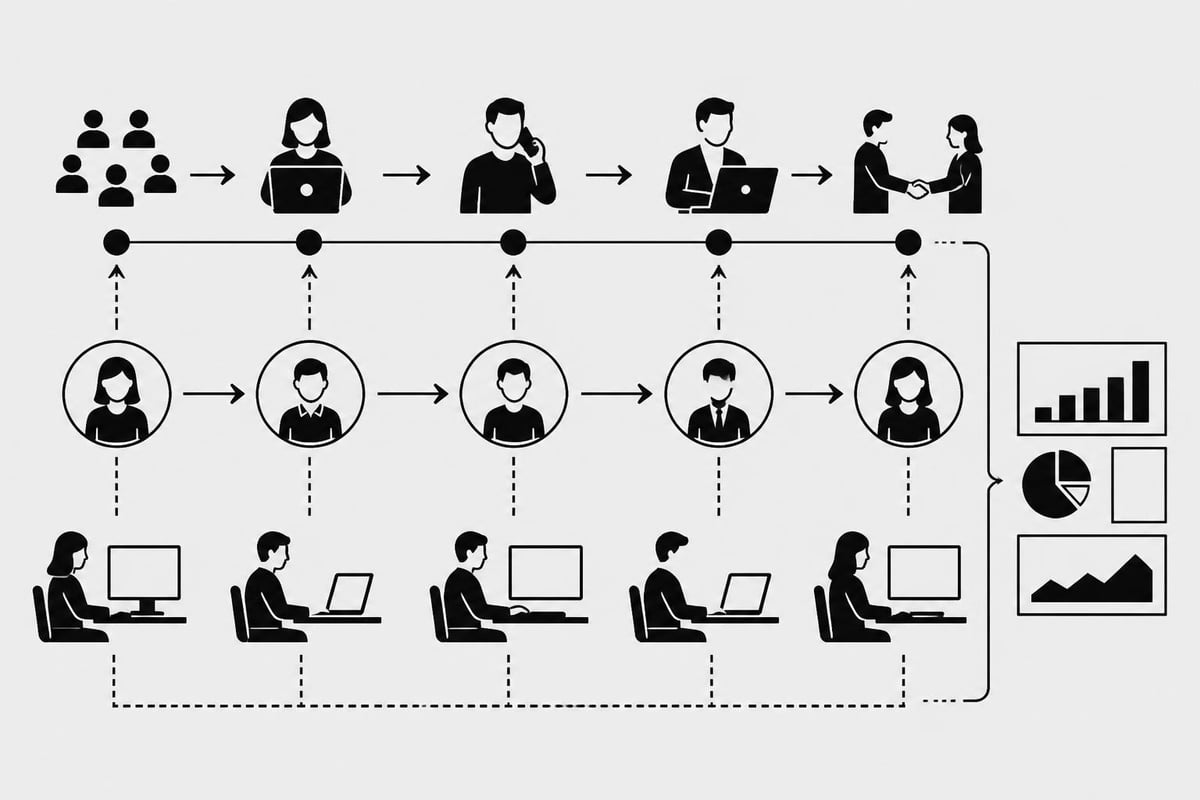

Create a simple workflow map that tracks:

How leads enter your system and through which channels

Every handoff point between marketing, SDRs, account executives, and customer success

Where data gets manually entered, copied, or reformatted

Which reports actually get used versus which ones exist to satisfy executives

This documentation reveals the gap between designed process and lived reality. That gap is where you'll find your highest-impact opportunities when you launch AI solutions.

Identifying High-Impact Automation Targets

Not all sales activities deliver equal value when automated. Some tasks benefit tremendously from AI assistance. Others require human judgment, relationship intelligence, or strategic thinking that current AI cannot replicate effectively.

High-value automation targets typically share specific characteristics. They're repetitive, data-intensive, rule-based, or time-sensitive. They drain energy from your best performers and create bottlenecks during peak activity periods.

Consider these common high-impact areas:

Lead qualification and scoring based on behavioral signals, firmographic data, and engagement patterns

Meeting preparation that pulls relevant context from multiple systems and formats it for quick consumption

Follow-up sequencing that adapts based on prospect behavior rather than following rigid timelines

Data enrichment that keeps contact records current without manual research

Pipeline forecasting that identifies at-risk deals before they stall

Contrast these with activities where AI often disappoints: building genuine executive relationships, navigating complex political dynamics within prospect organizations, crafting highly customized proposals that address unique business challenges, or negotiating contract terms that balance competing interests.
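To make the first item on that list concrete, here is a minimal sketch of rule-based lead scoring that combines firmographic, behavioral, and engagement signals. Every field name, weight, and threshold below is a hypothetical placeholder, not a recommendation; the right values come from your own closed-won data.

```python
from dataclasses import dataclass


@dataclass
class Lead:
    employee_count: int        # firmographic signal
    industry: str              # firmographic signal
    pricing_page_visits: int   # behavioral signal
    emails_opened: int         # engagement signal
    replied: bool              # engagement signal


# Hypothetical target segment; replace with your own ideal customer profile.
TARGET_INDUSTRIES = {"software", "fintech"}


def score_lead(lead: Lead) -> int:
    """Return a 0-100 score built from simple, transparent rules."""
    score = 0
    if lead.employee_count >= 200:
        score += 25
    if lead.industry.lower() in TARGET_INDUSTRIES:
        score += 20
    score += min(lead.pricing_page_visits, 3) * 10  # capped at 30 points
    score += min(lead.emails_opened, 5) * 2         # capped at 10 points
    if lead.replied:
        score += 15
    return score


def route(lead: Lead) -> str:
    """Send strong leads to a rep, weak ones to nurture, the rest to a human."""
    score = score_lead(lead)
    if score >= 70:
        return "sales"
    if score < 30:
        return "nurture"
    return "review"
```

Note the middle band: leads that are neither clearly strong nor clearly weak go to human review, which keeps judgment calls with people rather than with the rules.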

Strategic Planning for Your AI Launch

Once you understand your current state and high-impact opportunities, the planning phase determines whether your AI initiative delivers measurable ROI or becomes another abandoned project. Strategic planning means defining success metrics before selecting solutions, not retrofitting justifications after purchase.

Setting Realistic Success Metrics

Effective metrics connect AI implementation directly to revenue outcomes or efficiency gains. Vanity metrics like "number of emails automated" or "AI tasks created" mean nothing if deals aren't closing faster or reps aren't engaging more qualified prospects.

| Metric Category | Weak Indicator | Strong Indicator |
|---|---|---|
| Efficiency | Tasks automated | Hours saved per rep per week |
| Quality | AI suggestions generated | Percentage of AI suggestions accepted |
| Revenue Impact | Leads processed | Conversion rate improvement by stage |
| Adoption | Features enabled | Daily active usage by role |

Your metrics should reflect your specific bottlenecks. If pipeline velocity is your constraint, measure time-to-close and stage progression speed. If lead volume overwhelms your team, track qualification accuracy and cost-per-qualified-lead. Generic dashboards produce generic results.
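The strong indicators are all simple to compute once the underlying counts are logged. The sketch below shows one way to calculate three of them; the function names and input shapes are assumptions made for illustration, not the output format of any particular CRM.

```python
def hours_saved_per_rep_per_week(baseline_hours, current_hours):
    """Average weekly hours saved per rep on the automated task.

    Both arguments map rep name -> weekly hours spent on the task.
    Only reps present in both periods are compared.
    """
    reps = baseline_hours.keys() & current_hours.keys()
    if not reps:
        raise ValueError("no reps appear in both periods")
    return sum(baseline_hours[r] - current_hours[r] for r in reps) / len(reps)


def acceptance_rate(accepted, generated):
    """Share of AI suggestions that reps actually accepted."""
    return accepted / generated if generated else 0.0


def conversion_lift_by_stage(baseline, current):
    """Percentage-point change in conversion rate for each pipeline stage.

    Each argument maps stage -> (entered, advanced) counts.
    """
    lift = {}
    for stage, (entered, advanced) in current.items():
        if stage not in baseline:
            continue  # no baseline to compare against
        base_entered, base_advanced = baseline[stage]
        if entered == 0 or base_entered == 0:
            continue  # not enough data to compute a rate
        change = advanced / entered - base_advanced / base_entered
        lift[stage] = round(100 * change, 1)
    return lift
```

Reporting lift in percentage points per stage, rather than as one blended number, shows which part of the pipeline actually moved.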

Choosing Between Build and Buy

The build-versus-buy decision carries significant implications for how you launch AI capabilities. Off-the-shelf platforms offer faster deployment and established support structures. Custom solutions deliver precise alignment with your unique workflows and competitive advantages.

Most successful implementations combine both approaches strategically. They use proven platforms for common functions like email sequencing or calendar management while building custom AI for proprietary processes that differentiate their sales motion. Custom-built AI solutions address the specific gaps that generic tools leave unresolved, particularly in complex B2B environments.

Platform advantages:

Faster initial deployment

Established vendor support

Regular feature updates

Lower upfront investment

Custom build advantages:

Exact workflow alignment

Proprietary competitive advantage

Full control over roadmap

Deep integration possibilities

The right choice depends on your sales complexity, technical capabilities, and how much competitive advantage stems from your unique sales process versus other factors like product differentiation or market position.

Implementation Strategies That Actually Work

Implementation separates successful AI launches from expensive failures. Even the most sophisticated AI technology fails if your team refuses to adopt it or if it creates more friction than it removes. Effective implementation focuses as much on change management as technical deployment.

Piloting Before Full Rollout

Never launch AI across your entire sales organization simultaneously. Start with a contained pilot that includes willing participants, clear success criteria, and defined timelines. Pilots reduce risk, generate proof points, and identify implementation issues before they impact your full team.

Structure your pilot with these elements:

Select 3-5 enthusiastic participants who represent different experience levels and sales roles

Define a 30-60 day testing window with weekly check-ins and feedback sessions

Establish baseline metrics before the pilot begins so you can measure actual impact

Document everything, including unexpected issues, workflow changes, and rep feedback

Create decision criteria for what constitutes success versus failure

Your pilot should test both technical functionality and human factors. Does the AI actually save time? Do reps trust its recommendations? Does it integrate smoothly with existing workflows? Are there edge cases that break the automation?
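Decision criteria are easiest to honor when they are written down before the pilot starts. As a rough sketch, assuming each metric is "higher is better" and that the metric names and required gains are ones you choose yourself, the comparison against baseline could look like this:

```python
def evaluate_pilot(baseline, pilot, required_gain):
    """Compare pilot metrics with the pre-pilot baseline.

    baseline, pilot: metric name -> measured value (higher is better).
    required_gain: metric name -> minimum improvement agreed in advance.
    Returns a verdict plus the pass/fail result for each metric.
    """
    results = {
        metric: (pilot[metric] - baseline[metric]) >= gain
        for metric, gain in required_gain.items()
    }
    passed = sum(results.values())
    if passed == len(results):
        verdict = "expand"    # every criterion met
    elif passed == 0:
        verdict = "stop"      # no criterion met
    else:
        verdict = "iterate"   # mixed results: fix and re-test
    return verdict, results
```

For example, with hypothetical weekly numbers:

```python
baseline = {"meetings_booked": 4, "selling_hours": 20}
pilot = {"meetings_booked": 6, "selling_hours": 23}
required = {"meetings_booked": 1, "selling_hours": 2}
verdict, details = evaluate_pilot(baseline, pilot, required)
```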

Training Teams for AI-Augmented Selling

Technology adoption requires more than a single training session and a help document. Your team needs to understand not just how to use new AI tools, but why they matter and how they change daily workflows. This understanding comes through repetition, examples, and seeing tangible results.

Effective training combines multiple formats:

Live demonstrations showing real scenarios from your sales process

Recorded walkthroughs that reps can reference when stuck

Office hours where reps can ask questions and troubleshoot

Success stories from pilot participants

Regular reinforcement in team meetings

Address the "what's in it for me" question explicitly. Reps care about quota attainment, commission checks, and not working weekends. Frame your AI tools in those terms. Show how automation eliminates weekend data entry or helps them reach quota faster, not how it aligns with corporate strategy.

Measuring Results and Iterating

The work doesn't end when you launch AI systems into production. Continuous measurement and refinement separate sustainable improvements from temporary bumps that fade as novelty wears off. Measurement requires comparing current performance against pre-implementation baselines, not against hopes and projections.

Tracking Actual Usage Patterns

Usage metrics reveal whether your AI tools are actually helping or just occupying space in your tech stack. Low adoption rates signal poor tool design, inadequate training, or misaligned functionality. High adoption without performance improvement suggests your AI is entertaining but not impactful.

Monitor these usage indicators weekly:

Daily active users by role and team

Feature utilization rates across different capabilities

Time spent in AI tools versus manual processes

Ratio of AI suggestions accepted versus rejected

Support tickets and common frustration points

Compare usage patterns between high performers and average performers. If your best reps avoid certain features while struggling reps overuse them, that's valuable intelligence about tool effectiveness. The correlation between AI usage and actual results matters more than usage volume alone.
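Both comparisons in that paragraph can be run on a spreadsheet export. The sketch below is one possible approach: a plain Pearson correlation between per-rep usage and per-rep results, plus the usage gap between top performers and everyone else. The input shapes are assumptions, and with only a handful of reps a correlation is a prompt for questions, not proof of cause.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation between two equal-length lists of numbers."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two lists of equal length with at least 2 points")
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one series is constant")
    return cov / sqrt(var_x * var_y)


def usage_gap(usage_by_rep, top_performers):
    """Average feature usage of top performers minus that of everyone else."""
    top = [u for rep, u in usage_by_rep.items() if rep in top_performers]
    rest = [u for rep, u in usage_by_rep.items() if rep not in top_performers]
    if not top or not rest:
        raise ValueError("need at least one rep in each group")
    return sum(top) / len(top) - sum(rest) / len(rest)
```

A negative `usage_gap` for a feature means your best reps use it less than the rest of the team, which is exactly the pattern worth investigating.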

Optimizing Based on Real Feedback

Your sales team will tell you exactly what's broken if you create safe channels for honest feedback. They experience friction points daily that never surface in executive reviews. Regular feedback loops turn your implementation into a continuously improving system rather than a static deployment.

| Feedback Method | Frequency | Purpose |
|---|---|---|
| Anonymous surveys | Monthly | Capture honest criticism without political filtering |
| Small group sessions | Bi-weekly | Deep dive into specific issues and workflows |
| One-on-one interviews | Quarterly | Understand individual adoption barriers |
| Usage analytics review | Weekly | Identify patterns that contradict stated feedback |

Act visibly on feedback you receive. When reps report that AI-generated email templates sound robotic, refine the prompts and share the improvements. When they identify a manual step that should be automated, add it to your roadmap with a timeline. Visible responsiveness builds trust and encourages continued participation.

Avoiding Common Launch Failures

Most AI implementations fail predictably. They fall into the same traps that organizations have stumbled into for years with previous technology waves. Understanding these patterns helps you navigate around them rather than learning through expensive mistakes.

Over-Automating Too Quickly

The temptation to automate everything immediately undermines more AI projects than any other factor. Leaders see AI's potential and want instant transformation across every sales activity. This approach overwhelms teams, introduces too many variables to debug effectively, and creates resistance that poisons future initiatives.

Successful launches follow a crawl-walk-run progression. They automate one high-impact workflow completely before expanding to additional areas. This focus allows you to perfect the implementation, build organizational confidence, and demonstrate clear ROI before asking for budget and patience to tackle the next challenge. Research on launching AI tools and their adoption patterns suggests that focused, well-executed launches generate more sustained traction than broad, shallow deployments.

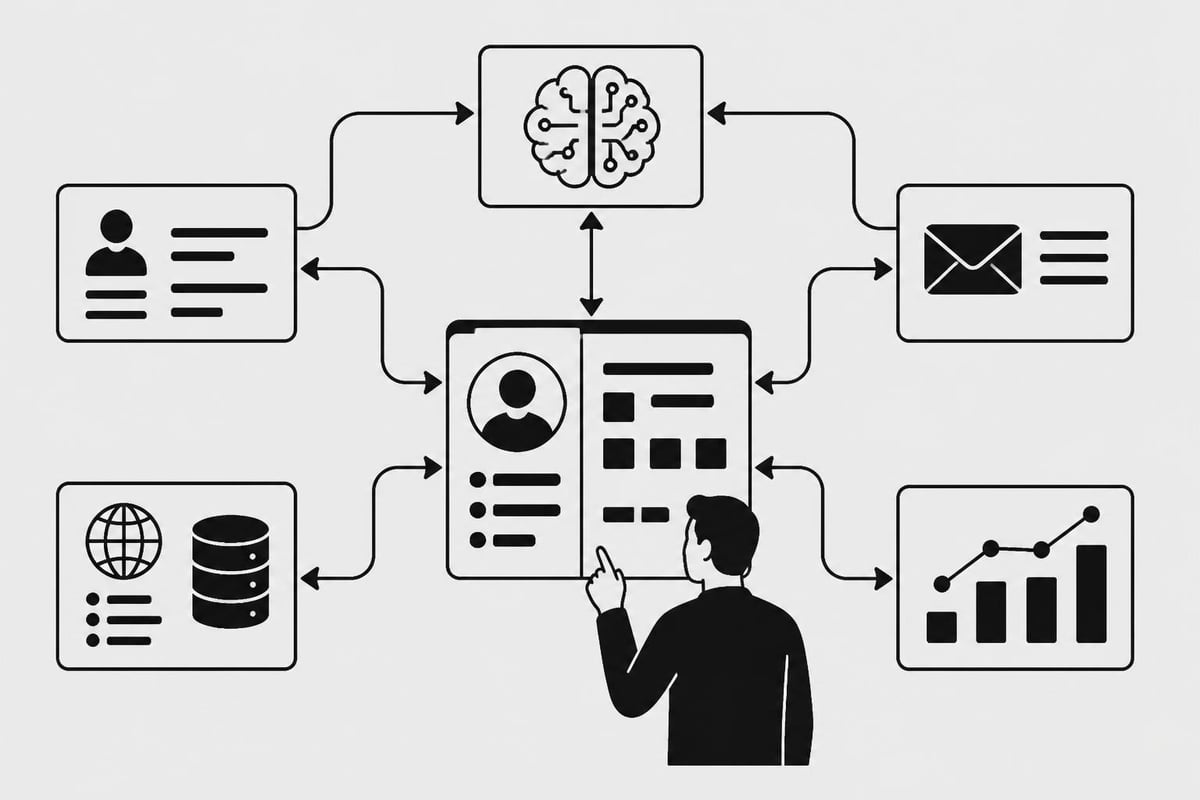

Ignoring Integration Requirements

AI tools don't exist in isolation. They need to pull data from your CRM, enrichment services, email systems, calendar platforms, and communication tools. Poor integration creates data silos, manual data transfer, and inconsistent information across systems. These friction points destroy the efficiency gains that justified your AI investment.

Before you launch AI capabilities, map every integration point:

Where does the AI need to read data and from which systems

Where does it need to write data back and in what format

How often does data need to sync to remain useful

What happens when integration points fail or time out

Who's responsible for maintaining each connection

Integration complexity grows faster than the number of systems involved. With n systems there can be up to n(n-1)/2 point-to-point connections, so each additional tool adds a potential connection, and a potential failure point, for every system already in place. This reality reinforces the value of consolidated sales systems that reduce integration complexity by design rather than adding to it.
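One lightweight way to keep the integration map honest is to record each connection as structured data and check it for gaps. The sketch below assumes a simple inventory you maintain by hand; the field names are illustrative. It also includes the upper bound on point-to-point connections among n systems, n(n-1)/2, to show how quickly the count grows.

```python
from dataclasses import dataclass


@dataclass
class IntegrationPoint:
    source: str        # system the AI reads from
    target: str        # system it writes back to
    sync_minutes: int  # how often data must sync to stay useful
    on_failure: str    # e.g. "retry", "queue", "alert"; empty if undefined
    owner: str         # person or team maintaining it; empty if nobody


def unowned_or_unhandled(points):
    """Return connections that lack an owner or a defined failure behavior."""
    return [p for p in points if not p.owner or not p.on_failure]


def max_pairwise_connections(system_count: int) -> int:
    """Upper bound on point-to-point connections among n systems: n(n-1)/2."""
    return system_count * (system_count - 1) // 2
```

Going from five systems to six raises the ceiling from 10 possible connections to 15, even though only one tool was added.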

Scaling What Works

Once your pilot proves successful and your team shows consistent adoption, scaling requires different considerations than initial implementation. You're no longer testing viability. You're operationalizing a proven system across diverse teams, geographies, and use cases.

Expanding Across Teams Strategically

Different sales teams have different needs, even within the same organization. Enterprise teams selling to Fortune 500 companies operate differently than SMB teams closing transactional deals. Geographic teams face regional compliance requirements and cultural considerations. Product-specific teams develop specialized knowledge and workflows.

Scale in waves that respect these differences:

Second wave: Expand to teams with similar characteristics to your pilot group

Third wave: Adapt to teams with moderate differences, incorporating lessons from wave two

Fourth wave: Tackle teams with significant variations, using accumulated experience

Continuous: Maintain feedback loops from all teams to identify diverging needs

Each wave should include team-specific customization rather than forcing uniform adoption. AI prompts, qualification criteria, and automation rules often need adjustment for different contexts. The impact of AI launches varies significantly based on how well implementations respect specific operational requirements rather than imposing one-size-fits-all approaches.
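Team-specific customization does not require a separate system per team. A common pattern, sketched here with hypothetical setting names and values, is a shared base configuration with a small set of overrides layered on top for each team:

```python
# Hypothetical defaults shared by every team; names and values are illustrative.
BASE_CONFIG = {
    "min_qualification_score": 70,
    "follow_up_days": 3,
    "email_tone": "concise",
}

# Team-specific adjustments, added wave by wave as lessons accumulate.
TEAM_OVERRIDES = {
    "enterprise": {"min_qualification_score": 80, "follow_up_days": 7},
    "smb": {"follow_up_days": 2},
}


def config_for(team: str) -> dict:
    """Return the base settings with a team's overrides applied on top."""
    overrides = TEAM_OVERRIDES.get(team, {})
    unknown = set(overrides) - set(BASE_CONFIG)
    if unknown:
        # Catch typos early instead of silently creating new settings.
        raise KeyError(f"unknown settings for {team!r}: {sorted(unknown)}")
    return {**BASE_CONFIG, **overrides}
```

Keeping overrides small and explicit makes it easy to see where each team diverges from the default and why.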

Building Internal Expertise

Vendor dependencies create long-term risk and slow improvement cycles. As you scale AI capabilities, develop internal expertise that can troubleshoot issues, optimize configurations, and implement incremental improvements without external consultants.

This doesn't mean hiring a full AI engineering team. It means identifying technically minded sales operations professionals, providing them with appropriate training, and giving them permission to experiment and iterate. Internal experts understand your business context in ways external vendors never will, allowing for faster, more relevant optimization.

Future-Proofing Your AI Investment

Technology evolves rapidly, particularly in AI. Platforms that seem cutting-edge today become limitations tomorrow. Future-proofing means building flexibility into your architecture rather than locking yourself into proprietary systems that restrict your options.

Maintaining Data Portability

Your sales data represents accumulated organizational knowledge. Contacts, interaction history, deal context, and performance patterns have value far beyond any single platform. Ensure you can extract this data in usable formats without vendor cooperation.

Require these data portability features:

Standard export formats like CSV, JSON, or XML for all critical data types

API access that allows programmatic data extraction without manual downloads

Documentation of data schemas and relationships between different record types

No extraction penalties in terms of pricing, throttling, or contractual restrictions

Data portability isn't just about switching vendors. It enables analysis, backup strategies, and integration with future tools you haven't imagined yet. Tools such as Launch's AI-powered development platform show how quickly new capabilities emerge. Your architecture should accommodate innovation rather than resist it.
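A quick way to test a portability claim is to pull a sample of records and confirm they survive an export and re-import. The sketch below uses only Python's standard library and assumes the records have already been fetched as flat dictionaries; how you fetch them depends on your vendor's API, which is not shown here.

```python
import csv
import io
import json


def export_records(records, fmt="csv"):
    """Serialize a list of flat dict records to CSV or JSON text."""
    if fmt == "json":
        return json.dumps(records, indent=2, sort_keys=True)
    if fmt == "csv":
        if not records:
            return ""
        # Use the union of all keys so sparse records still export cleanly.
        fields = sorted({key for record in records for key in record})
        buffer = io.StringIO()
        writer = csv.DictWriter(buffer, fieldnames=fields, restval="")
        writer.writeheader()
        writer.writerows(records)
        return buffer.getvalue()
    raise ValueError(f"unsupported format: {fmt}")


def round_trip_ok(records):
    """Check that a JSON export can be re-imported without losing data."""
    return json.loads(export_records(records, "json")) == records
```

If a round trip like this fails, or the vendor cannot supply the records in a form you can feed into it, treat that as a portability gap to raise before signing.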

Balancing Customization and Maintainability

Heavy customization delivers precise functionality but creates technical debt that becomes expensive to maintain. Each custom integration, unique workflow, or specialized automation requires ongoing attention as underlying platforms evolve. Strike a balance between customization that delivers competitive advantage and standardization that reduces long-term overhead.

| Customization Level | Appropriate For | Risk Factors |
|---|---|---|
| Heavy Custom | Core competitive differentiators | High maintenance cost, upgrade challenges |
| Moderate Custom | Important but not unique processes | Balanced ongoing investment |
| Light Custom | Standard workflows with minor tweaks | Minimal technical debt |
| Zero Custom | Commodity functions available in platforms | Lost differentiation opportunity |

Reserve heavy customization for processes that directly contribute to your competitive positioning. Use standard configurations for everything else, even if they feel slightly suboptimal. The marginal improvement from perfect customization rarely justifies the accumulated technical debt.

Adapting to Market Changes

Markets evolve. Buyer behaviors shift. Competitive dynamics change. Regulatory requirements emerge. Your AI systems need to adapt continuously rather than remaining static. This adaptability requires both technical flexibility and organizational discipline around regular review cycles.

Building Feedback Mechanisms

Create systematic ways to capture signals about changing market conditions and translate those signals into system improvements. Feedback should come from multiple sources: your sales team encountering new objections, customer success hearing changing priorities, prospects behaving differently, and market intelligence revealing competitor movements.

Establish monthly review sessions that examine:

Win/loss patterns: Are we losing deals for different reasons than six months ago?

Buyer engagement: Have response rates, meeting acceptance, or time-to-decision changed?

Qualification accuracy: Is our AI correctly identifying good-fit prospects or has our ICP shifted?

Competitive landscape: Are new competitors emerging or existing ones changing their approach?

These sessions should drive concrete actions, not just generate discussion. If buyer priorities have shifted, update your qualification criteria and retrain your AI models. If new competitors are changing deal dynamics, adjust your battle cards and competitive positioning within AI-generated content. For teams looking to understand where their current systems need refinement, a comprehensive sales function audit provides the detailed analysis necessary to identify specific adaptation opportunities.
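The win/loss question in particular lends itself to a quick calculation before each review. As a minimal sketch, assuming you tag every lost deal with a single loss reason (the labels below are made up), you can compare how each reason's share of losses has moved between two periods:

```python
from collections import Counter


def reason_shift(previous_losses, recent_losses):
    """Percentage-point change in each loss reason's share between periods.

    Each argument is a list with one loss-reason label per lost deal.
    Results are ordered by the size of the change, largest first.
    """
    if not previous_losses or not recent_losses:
        raise ValueError("both periods need at least one lost deal")
    prev, recent = Counter(previous_losses), Counter(recent_losses)
    shift = {}
    for reason in set(prev) | set(recent):
        before = 100 * prev[reason] / len(previous_losses)
        after = 100 * recent[reason] / len(recent_losses)
        shift[reason] = round(after - before, 1)
    return dict(sorted(shift.items(), key=lambda item: -abs(item[1])))
```

For instance:

```python
six_months_ago = ["price"] * 6 + ["timing"] * 4
last_quarter = ["price"] * 3 + ["competitor"] * 5 + ["timing"] * 2
shifts = reason_shift(six_months_ago, last_quarter)
```

A reason that barely appeared six months ago and now tops the list is a concrete agenda item, not just a talking point.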

Staying Informed on AI Capabilities

AI technology evolves rapidly. Capabilities that seemed impossible in 2024 became routine in 2025 and will feel primitive by 2027. Staying informed means more than reading vendor newsletters. It requires understanding fundamental AI advancements and translating them into potential applications for your specific sales context.

Follow developments in:

Large language models and their improving ability to understand context and generate relevant responses

Multimodal AI that can process text, voice, images, and video to extract sales intelligence

Agentic AI that can execute multi-step workflows with minimal human supervision

Personalization engines that adapt messaging based on individual prospect behaviors and preferences

Don't chase every new capability. Evaluate innovations against your strategic priorities and existing bottlenecks. The fact that technology can do something doesn't mean it should for your specific situation. Thoughtful adoption beats early adoption when it comes to sustainable competitive advantage.

Successfully launching AI within your sales organization requires more than selecting the right technology. It demands clear-eyed assessment of current reality, strategic prioritization of high-impact opportunities, and disciplined execution that respects both technical requirements and human adoption factors. The companies that thrive in 2026 and beyond will be those that view AI as an ongoing capability to develop rather than a one-time project to complete. If you're ready to build AI-powered sales systems that actually generate results rather than adding complexity, erakraft inc. specializes in creating unified systems that consolidate your tools and help your team focus on closing deals instead of managing software.